I hold a Ph.D. from South China University of Technology, where I was advised by Prof. Ping Zhang. My research spans embodied intelligence, robotic manipulation, active perception, reinforcement learning, and 3D point cloud understanding. I have published 7 first-author papers in IJCAI, AAAI, TNNLS, ICASSP, and ICME, 4 of them as oral presentations. I now work at Astribot as an Embodied Intelligence Algorithm Engineer, building VLA training pipelines and applying RL and world models to dual-arm manipulation.

South China University of Technology, School of Computer Science and Engineering

South China University of Technology, School of Mechanical and Automotive Engineering

Undergraduate studies in mechanical engineering.

Guangdong HYNN Technology Co., Ltd.

Mechanical design and engineering.

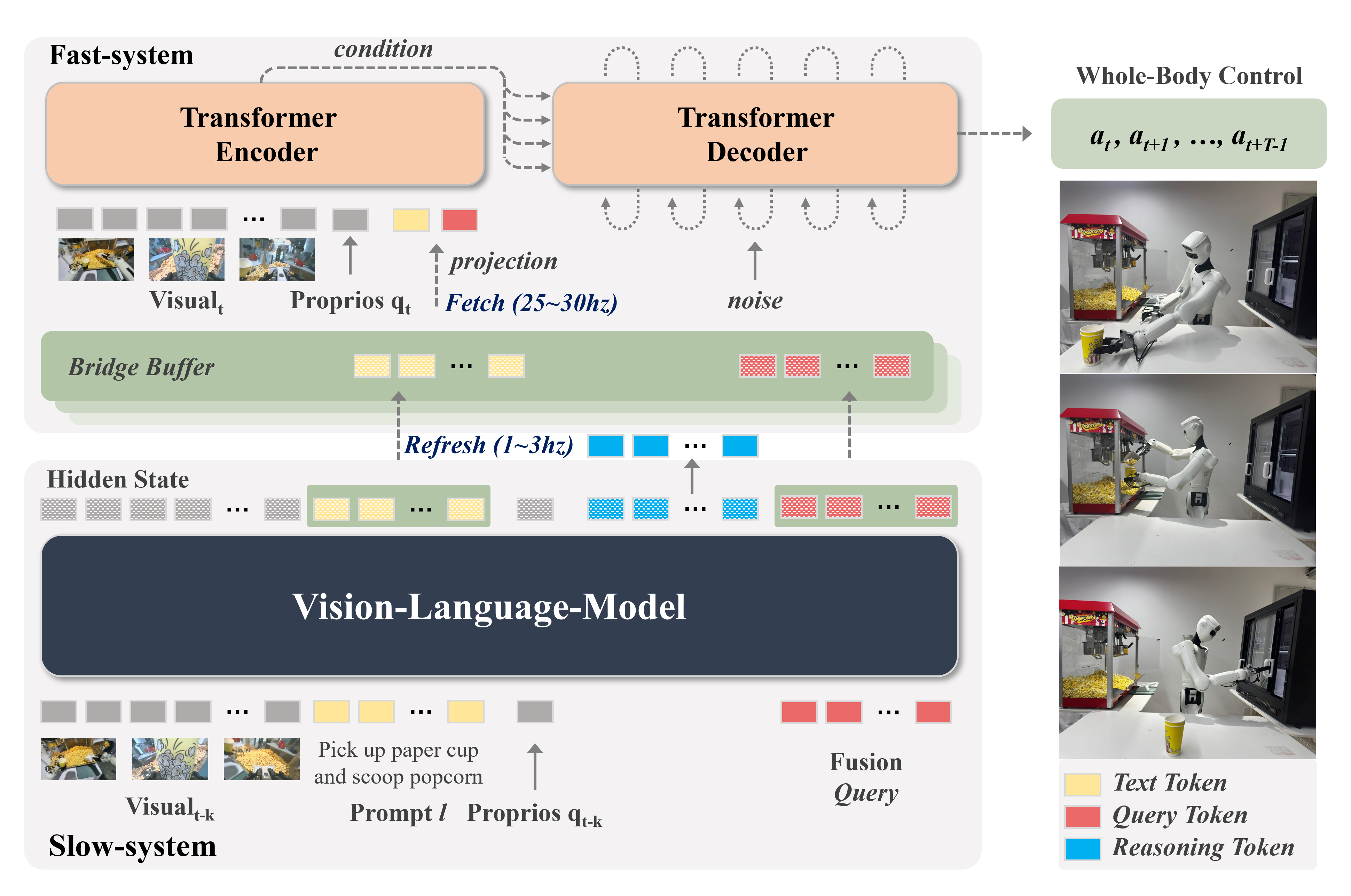

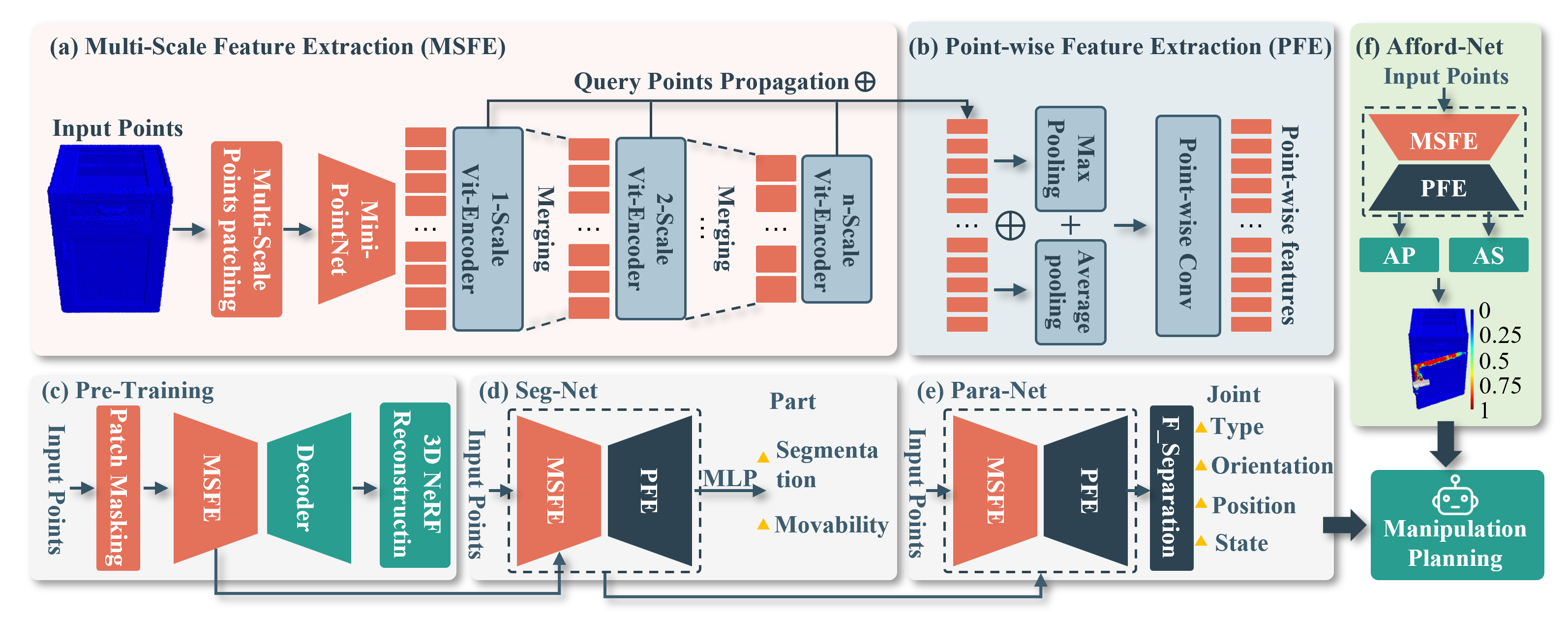

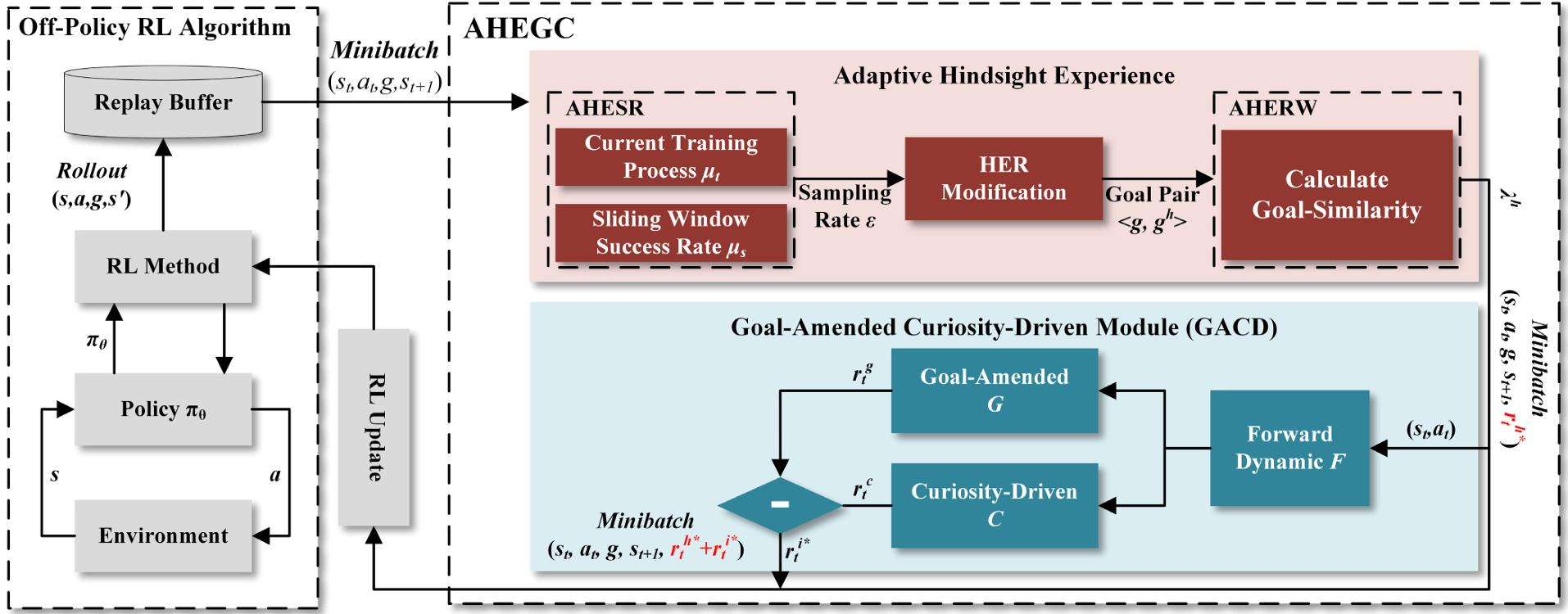

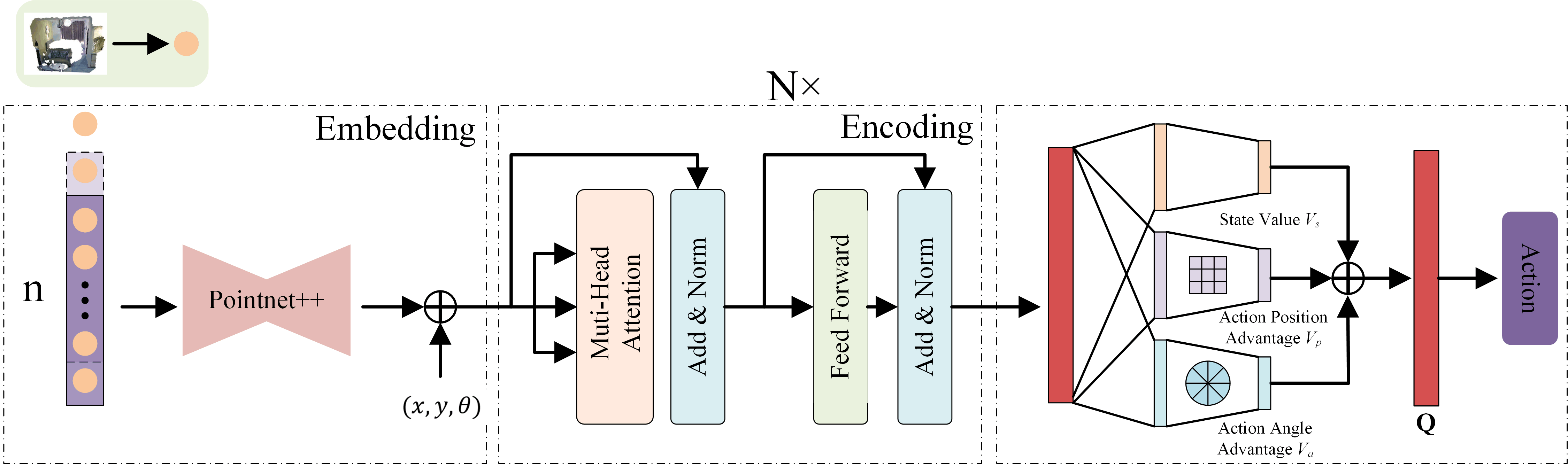

Generalized Manipulation of Articulated Objects Using Pre-trained Model. Leverages pre-trained visual models for generalizable robotic manipulation of articulated objects.

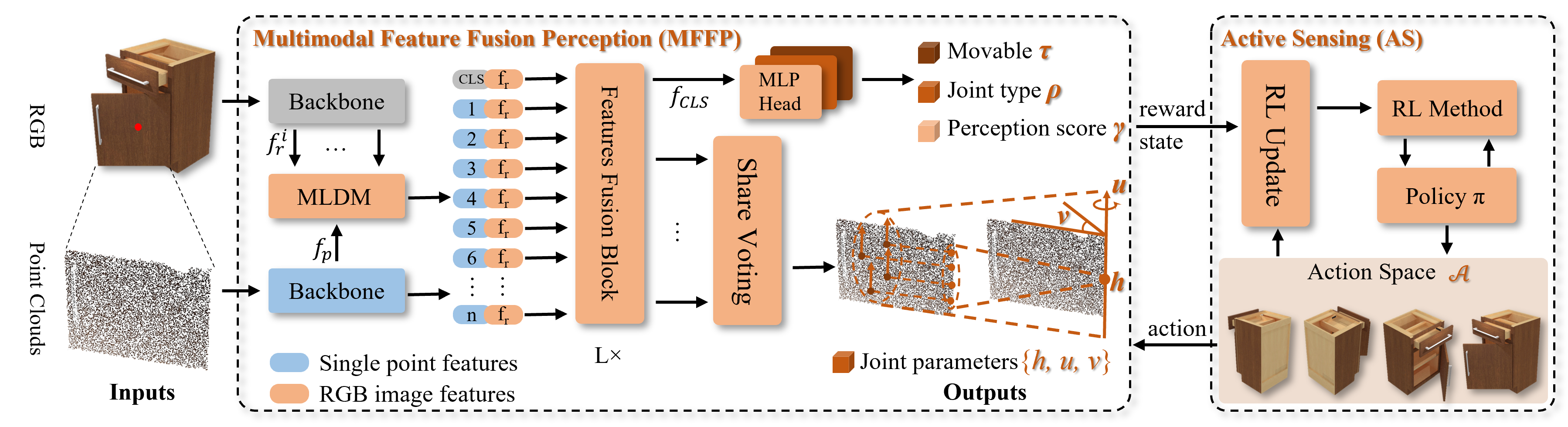

Multimodal Active Robotic Sensing for Articulated Characterization. A framework that enables robots to actively perceive and characterize articulated objects through multimodal sensing.

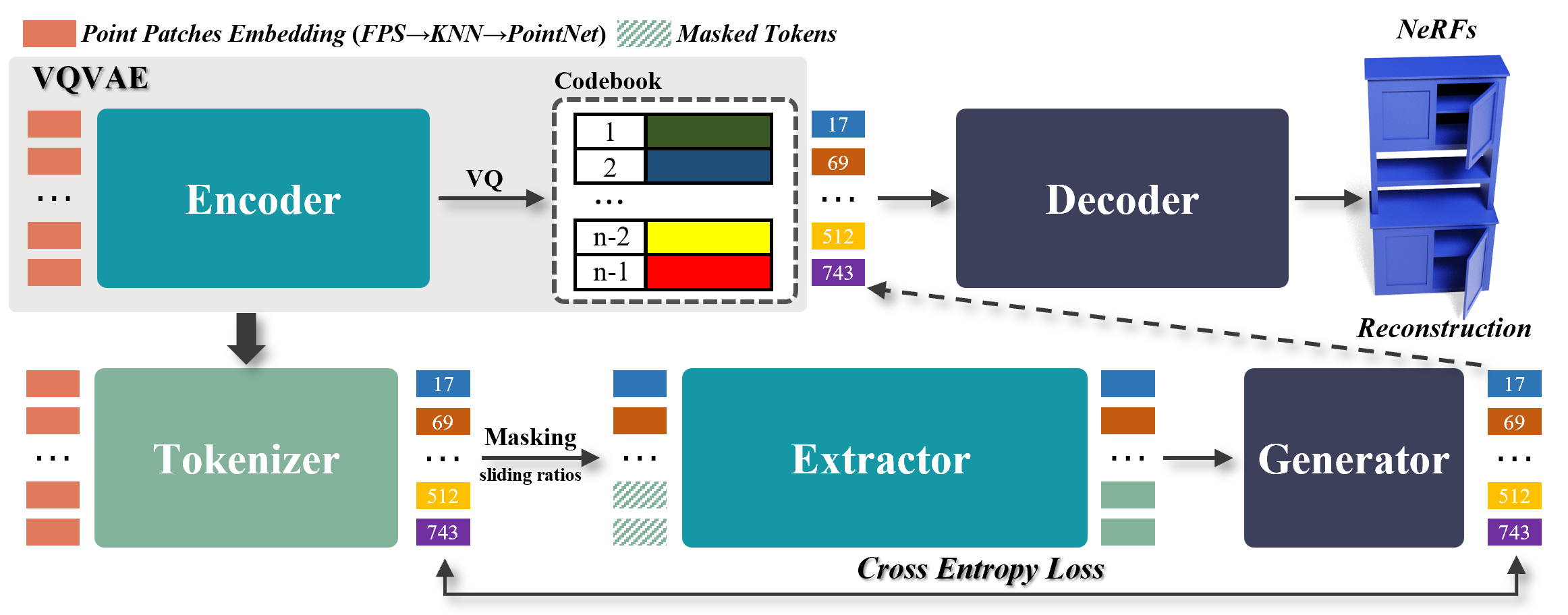

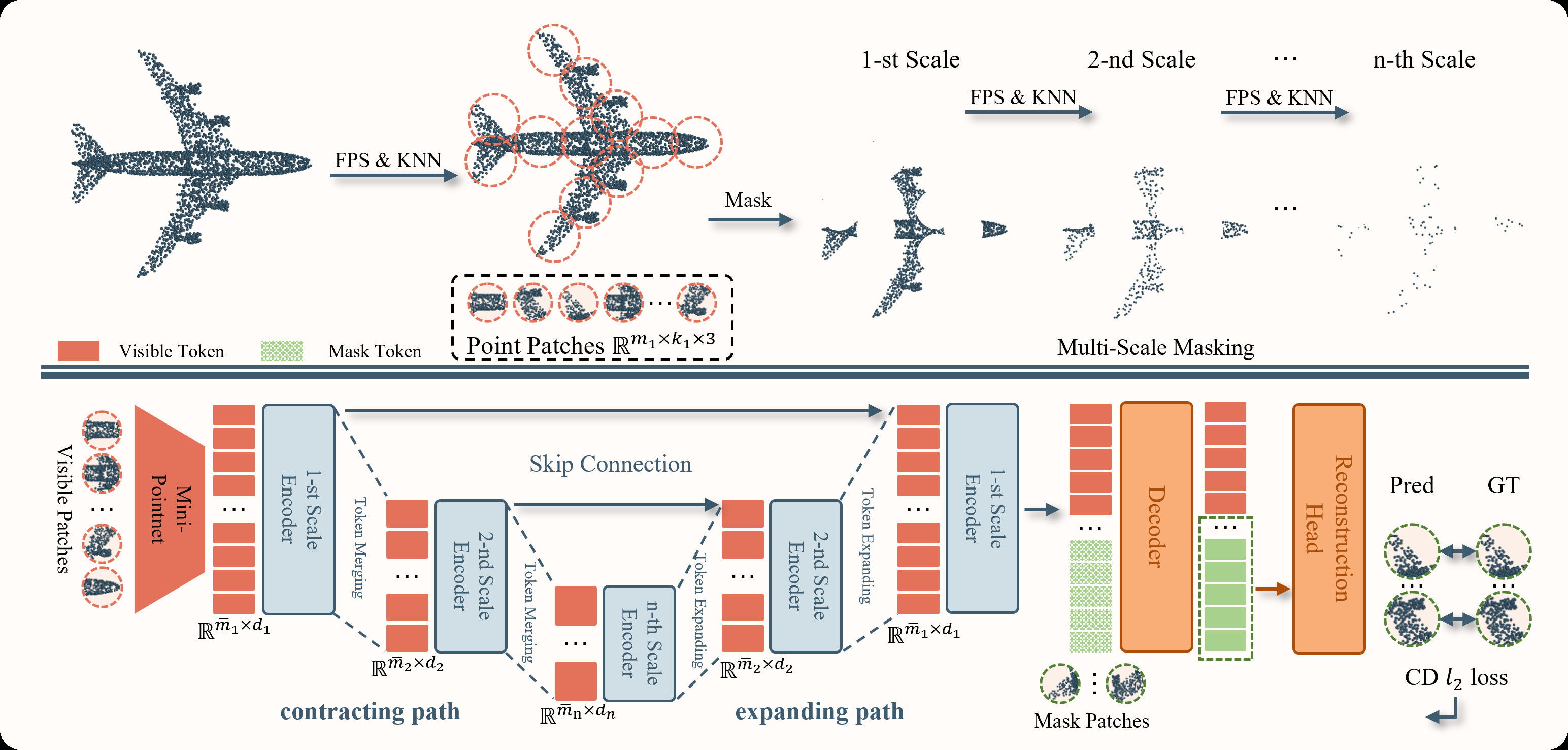

Masked Generative Extractor for Synergistic Representation and 3D Generation of Point Clouds. A joint framework combining point cloud representation learning with 3D shape generation.

FAEMTrack: Feature-Augmented Embedding and Cross-Drone Fusion for Single Object Tracking

IEEE Internet of Things Journal, 2025

Multi-objective Neural Architecture Search Combining Binary Artificial Bee Colony Algorithm for Dynamic Hand Gesture Recognition

Expert Systems with Applications, 2025

Reinforcement Learning-Driven Dual Neighborhood Structure Artificial Bee Colony Algorithm for Continuous Optimization Problem

Applied Soft Computing, 2024